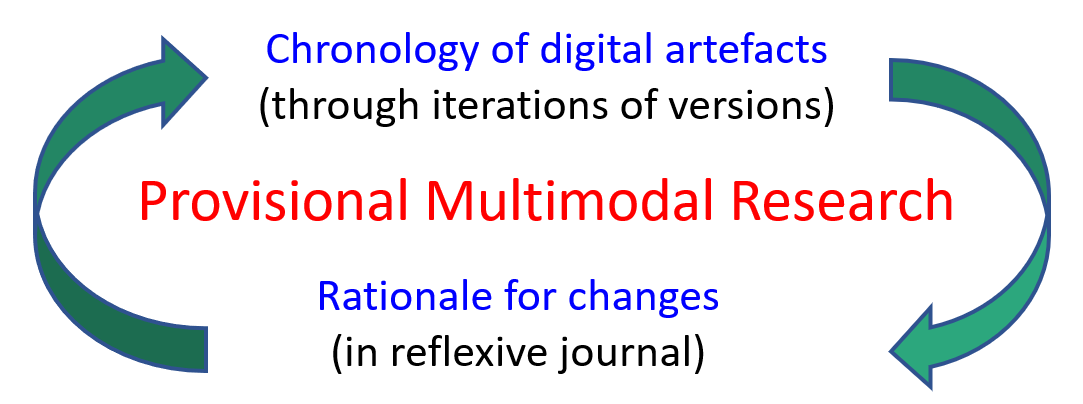

The research methodology for The SILO Project is Provisional Multimodal

Research (PMR). PMR is a research methodology designed for educators

to document the construction of digital artefacts (Jacobs, 2024). In

essence, PMR involves archiving each version of a digital artefact

while keeping a reflexive journal to document the rationale for any

changes which are made. PMR "reflects a paradigmatic shift in

scholarly communication—a move from monomodality to multimodality, from

static representation to dynamic, embodied, and interactive

meaning-making" (Akmalia & Faizin, 2026, p. 1). Furthermore, "by

integrating digital devices and multimodal composition into pedagogy,

educators can foster more inclusive, creative, and participatory forms of

learning" (Akmalia & Faizin, 2026, p. 2). The main ideas involved in

PMR can be summarised as follows:

The chronology of digital artefacts and the rationale for changes are

mutually informative as shown in Figure 3.1.
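The pairing of chronology and rationale can be illustrated with a minimal sketch. It is not the tooling used by The SILO Project; the class names, file locations, dates and journal entries below are all hypothetical and chosen only to show how each archived version might travel with its journal entry.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class ArtefactVersion:
    """One archived version of a digital artefact plus its journal entry."""
    archived_on: date
    location: str   # hypothetical path or URL of the archived copy
    rationale: str  # reflexive journal entry explaining the change


@dataclass
class ArtefactHistory:
    """Chronology of archived versions for a single digital artefact."""
    name: str
    versions: list[ArtefactVersion] = field(default_factory=list)

    def archive(self, archived_on: date, location: str, rationale: str) -> None:
        # Assumption: a version is never archived without a rationale.
        if not rationale.strip():
            raise ValueError("each archived version needs a journal rationale")
        self.versions.append(ArtefactVersion(archived_on, location, rationale))
        # Keep the versions in date order, whatever order they were entered in.
        self.versions.sort(key=lambda v: v.archived_on)

    def chronology(self) -> list[tuple[str, str]]:
        """Return (ISO date, rationale) pairs, oldest first."""
        return [(v.archived_on.isoformat(), v.rationale) for v in self.versions]


# Hypothetical usage with invented dates and entries.
history = ArtefactHistory("example-page")
history.archive(date(2024, 3, 2), "archive/example-page-v2.html",
                "Added a diagram after teacher feedback.")
history.archive(date(2024, 2, 1), "archive/example-page-v1.html",
                "First draft.")
for when, why in history.chronology():
    print(when, why)
```

Storing the rationale alongside each version, rather than in a separate document, is one way to keep the two records mutually informative: reading the chronology also reads the journal.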

The iterative nature of PMR means that the various pages of this website

change frequently because incremental improvements are actioned on a daily

basis. The SILO Project is built upon the following three premises:

Data sources

The relationship between the data sources is

shown in Figure 3.2.

Figure 3.2

Venn Diagram of the Data Sources

Co-construction

Another important methodological issue is

co-construction and how teachers and researchers understand their own

role as co-designers within the classroom. Much time and effort has gone

into cultivating a learning environment based on mutual trust and

respect to encourage the free flow of ideas in a spirit of

collaboration. As yet, there have been no differences of opinion

regarding implementation but the following three protocols are proposed

to manage such instances:

- Ultimately, it is the classroom teacher who has the final say about

what happens, as it is their classroom and they have a duty of care for

everything which occurs.

- If the researcher suggests an activity which is unfamiliar to the

classroom teachers (such as coding micro:bits), the researcher will

run the session so that the classroom teacher can observe without

having to invest any additional preparation time.

- If two or more classroom teachers within the same year level have a

difference of opinion in relation to classroom activities, each

teacher will remain free to pursue their chosen option. Such instances

are likely to be generative as, "It is through understanding the

recursive patterns of researchers’ framing questions, developing

goals, implementing interventions, and analyzing resultant activity

that knowledge is produced" (Barab & Squire, 2004, p. 10).

Teachers make countless decisions every day

but the decision-making process which guides such decisions is rarely

articulated because it is tacit knowledge. Figure 3.3 seeks to make this

tacit knowledge visible in the context of STEM education.

Figure 3.3

A Decision-Making Tool for STEM Education

Figure 3.3 is largely based on common sense

and professional judgement but a simple tool like this brings some

larger issues into focus such as relevance and suitability. It also

shows teachers where they might need to expand their skills, knowledge

or resources.

Assessment

As teachers, we are familiar with the

various types of assessment shown in Figure 3.4.

Figure 3.4

An Overview of Assessment Types

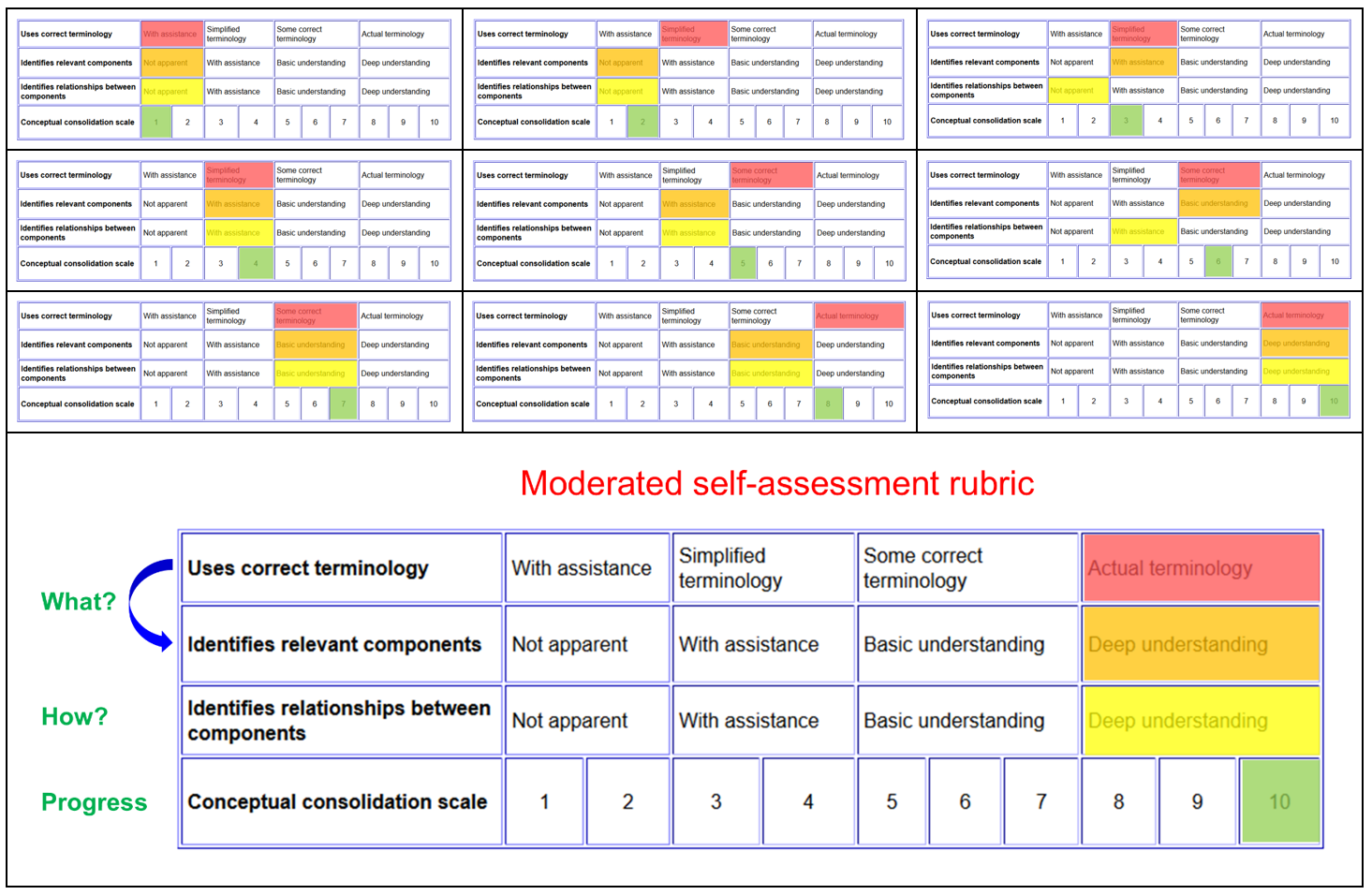

At the bottom of each of the 28 STEM units is the rubric shown in Figure 3.5.

Figure 3.5

A Rubric for Moderating Self-Assessments

As noted by Jacobs and Cripps Clark (2018),

progress through this rubric occurs from top to bottom. The implications

of this phenomenon are as follows:

- Initial research for a conceptual topic begins by first

identifying, and then using, correct terminology.

- An eventual outcome of investigating correct terminology is the

identification of relevant components.

- The pinnacle of conceptual consolidation involves understanding

the dynamic relationships that exist between the different

components.

- Conceptual consolidation itself must be understood on a

case-by-case basis because, regardless of any similarities, every

concept is different (Jacobs & Cripps Clark, 2018, p. 47).

Figure 3.6 is a static composite of the

animated GIF in Figure 3.5 so you can see the progression without being

distracted by the movement.

Figure 3.6

Static Composite of the Moderated Self-Assessment Rubric

The following paragraph provides an example of how the rubric can be used

to benchmark student achievement for a particular topic. It is taken

from SILO 2.3 'Fair tests'.

A student: (1) defines a variable; (2) outlines the requirements of a fair test; (3) can discuss the requirements of a fair test from memory in any order; (4) can explain why the independent variable is the focus of an experiment; (5) can measure the dependent variable and relate this measurement to the independent variable; (6) can explain why an experiment is only a fair test if the control variable(s) can be kept constant; (7) designs their own fair test; (8) writes instructions for their fair test with clearly defined variables; (9) formulates a hypothesis for their fair test; (10) can explain the relationship between fair tests and hypotheses.
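As a rough illustration of benchmarking against these ten statements, the sketch below records which statements a student has demonstrated and reports how far they have progressed without a gap. It assumes the statements are cumulative in the order listed, which the rubric itself may not require; the function name `benchmark` and the sample data are hypothetical.

```python
# The ten statements from SILO 2.3 'Fair tests', in the order given above.
FAIR_TEST_CRITERIA = [
    "defines a variable",
    "outlines the requirements of a fair test",
    "can discuss the requirements of a fair test from memory in any order",
    "can explain why the independent variable is the focus of an experiment",
    "can measure the dependent variable and relate it to the independent variable",
    "can explain why control variables must be kept constant for a fair test",
    "designs their own fair test",
    "writes instructions for their fair test with clearly defined variables",
    "formulates a hypothesis for their fair test",
    "can explain the relationship between fair tests and hypotheses",
]


def benchmark(demonstrated: set) -> int:
    """Return the highest statement number reached without a gap, counting from 1.

    Assumption: statements are treated as sequential, so a missing statement
    stops the count even if later ones have been demonstrated.
    """
    reached = 0
    for number in range(1, len(FAIR_TEST_CRITERIA) + 1):
        if number not in demonstrated:
            break
        reached = number
    return reached


# Hypothetical student who has shown statements 1, 2, 3 and 5 but not 4.
level = benchmark({1, 2, 3, 5})
print(level, FAIR_TEST_CRITERIA[level])  # the second value is the next statement to work on
```

A teacher moderating a self-assessment could compare the student's own claimed set of statements with the set observed in class and discuss any difference between the two results.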

This work is licensed under a Creative

Commons Attribution-NonCommercial-ShareAlike 4.0 International License